With $13B in fresh funding, Anthropic is safe - at least until OpenAI finishes “reorganizing” Statsig.

HOT TAKE

The Tabular Shift

Chatting with Nikolaos on this week’s podcast got me thinking: XGBoost isn’t dead yet - but its days as the tabular data king are numbered.

Are you still using it, or have you switched to models like TabPFN, FT-Transformers, or SAINT?

PRESENTED WITH JFROG

Coming up: MLOps Days London

We’re teaming up with JFrog for the next MLOps Days - this time in London on Thursday, September 18. Expect sharp talks, good conversation, and the usual mix of practitioners sharing what actually works in production.

The lineup is strong: Heiko Hotz (Google), Yuval Fernbach (JFrog), and Michelle Conway (Lloyds Banking Group) are on deck, covering everything from prompt automation for voice assistants to securing ML systems and getting GenAI live in financial services.

Spots are limited, so register now.

Curated finds to help you stay ahead

Closing the agentic loop with MCP, showing how autonomous agents iterate, verify, and complete tasks end-to-end across coding, data, and operational workflows.

The Infra Stack Reset highlights 10 key trends reshaping infrastructure spend in 2025, signaling a shift toward leaner, more economical platforms amid tightening budgets.

Text-to-speech model benchmarks from Coval, with a live comparison interface for testing latency and accuracy to help developers refine voice agent performance.

Inside vLLM explores how a high-throughput LLM inference engine is built, covering design, advanced features, scaling strategies, and distributed serving infrastructure.

MLOPS COMMUNITY

Distilling 200+ Hours of NeurIPS: What’s Next for AI

XGBoost’s crown is wobbling. NeurIPS signals the first tabular models that edge it out - not cheap yet, but real - plus a push toward LMs trained on actual databases.

Tabular shift: early wins over XGBoost on tables, with cost tradeoffs, and new efforts to build public tabular corpora for pretraining.

Agents with brakes: useful for decision support, not execution - reliability comes from external verifiers and knowledge graphs.

Frontier still leads: despite small-model buzz, top systems remain ahead; RL-style reasoning continues to improve them.

Bottom line: practical gains arrive when speed meets safeguards.
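To make the "agents with brakes" idea concrete, here is a minimal sketch of decision support gated by an external verifier. Everything in it is an illustrative assumption rather than anything presented in the talks: the knowledge graph is a plain set of triples, `triage_claims` is a hypothetical helper, and the sample facts are toy data. The agent only proposes claims; anything the graph cannot confirm goes to a human instead of being acted on.

```python
from typing import Iterable, List, Set, Tuple

Triple = Tuple[str, str, str]  # (subject, relation, object)


def triage_claims(
    proposals: Iterable[Triple], knowledge_graph: Set[Triple]
) -> Tuple[List[Triple], List[Triple]]:
    """Split agent proposals into graph-supported claims and claims for human review.

    Nothing is executed here: the function only sorts proposals, which is
    the "decision support, not execution" stance described above.
    """
    supported: List[Triple] = []
    needs_human: List[Triple] = []
    for claim in proposals:
        if claim in knowledge_graph:
            supported.append(claim)
        else:
            needs_human.append(claim)
    return supported, needs_human


# Toy knowledge graph (hypothetical facts, for illustration only).
graph = {
    ("service-a", "depends_on", "db-1"),
    ("service-b", "depends_on", "cache-1"),
}

# Hypothetical agent output: one claim the graph supports, one it does not.
agent_proposals = [
    ("service-a", "depends_on", "db-1"),
    ("service-a", "depends_on", "cache-1"),
]

supported, needs_human = triage_claims(agent_proposals, graph)
print("supported:", supported)
print("needs human review:", needs_human)
```

A real verifier would likely be fuzzier than exact set membership, but the shape stays the same: the check lives outside the model, and unverified output never reaches execution.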

The Era of AI Agents in Marketing

Agents now run the SDR playbook at machine speed - spotting intent, drafting founder-voice notes, and spinning up account-specific pages before humans step in.

Workflow: detect trials or job posts, crawl LinkedIn and public signals, craft multichannel outreach, route replies to a unified inbox, and ping AEs in Slack for handoff.

Measurement: UTMs show rising ChatGPT/Gemini referrals; replace brittle MQL/SQL rules with AI-qualified scoring from multi-signal context, plus LLM-assisted negative keyword pruning.

Result: agents handle the grind, humans build trust - response rates climb.
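For the measurement step, a rough sketch of counting AI-assistant referrals from UTM parameters might look like the following. The `AI_SOURCES` mapping, the `ai_referral_counts` helper, and the sample URLs are assumptions made up for illustration; the `utm_source` values your own analytics sees may differ.

```python
from collections import Counter
from urllib.parse import parse_qs, urlparse

# Hypothetical mapping from utm_source values to an assistant label.
# Check your own logs for the values that actually show up.
AI_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "chatgpt": "ChatGPT",
    "gemini": "Gemini",
    "gemini.google.com": "Gemini",
}


def ai_referral_counts(landing_urls):
    """Count landing-page hits per AI assistant, based on utm_source."""
    counts = Counter()
    for url in landing_urls:
        params = parse_qs(urlparse(url).query)
        # parse_qs returns a list per key; take the first value if present.
        source = params.get("utm_source", [""])[0].lower()
        label = AI_SOURCES.get(source)
        if label is not None:
            counts[label] += 1
    return counts


# Made-up landing URLs for illustration.
sample_urls = [
    "https://example.com/pricing?utm_source=chatgpt.com&utm_medium=referral",
    "https://example.com/?utm_source=gemini",
    "https://example.com/blog?utm_source=newsletter",
    "https://example.com/docs?utm_source=ChatGPT.com",
]

counts = ai_referral_counts(sample_urls)
print(dict(counts))
```

Running the same count week over week is one simple way to see whether the referral trend is actually rising.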

Securing AI Agents: The Future of MCP Authentication & Authorization

When your AI agent can pull sensitive Salesforce or Workday data, security isn’t optional - it’s urgent.

Why the naive “one admin key for everything” approach collapses the moment you scale

How OAuth 2.1 with token exchange creates user-scoped, auditable access without storing credentials

The shift toward Proof-of-Possession tokens to stop stolen keys from being reused

This architecture isn’t just theory - it’s becoming the enterprise standard for secure, scalable AI agent deployments.
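As a sketch of what the token-exchange step can look like on the wire, the snippet below builds the form body for an OAuth 2.0 Token Exchange request (RFC 8693). The parameter names and URN values come from that RFC; the helper name, the placeholder token, the audience URL, and the scope are assumptions for illustration, and no request is actually sent. This is not necessarily how the talk's architecture implements it.

```python
from urllib.parse import urlencode

# URNs defined by RFC 8693 (OAuth 2.0 Token Exchange).
TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def build_token_exchange_body(user_token: str, audience: str, scope: str) -> str:
    """Build the form-encoded body an agent would POST to the token endpoint.

    The agent presents the user's token (subject_token) and asks for a new,
    narrowly scoped token for one downstream system, so it never holds a
    shared admin key or the user's stored credentials.
    """
    fields = {
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": user_token,
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "requested_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,
        "scope": scope,
    }
    return urlencode(fields)


# Placeholder values; a real deployment would use its own issuer and scopes.
body = build_token_exchange_body(
    user_token="user-access-token-placeholder",
    audience="https://crm.example.com",
    scope="contacts.read",
)
print(body)
```

Because each exchanged token names one user, one audience, and one scope, the authorization server's logs double as the audit trail the blurb mentions.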

IN PERSON EVENTS

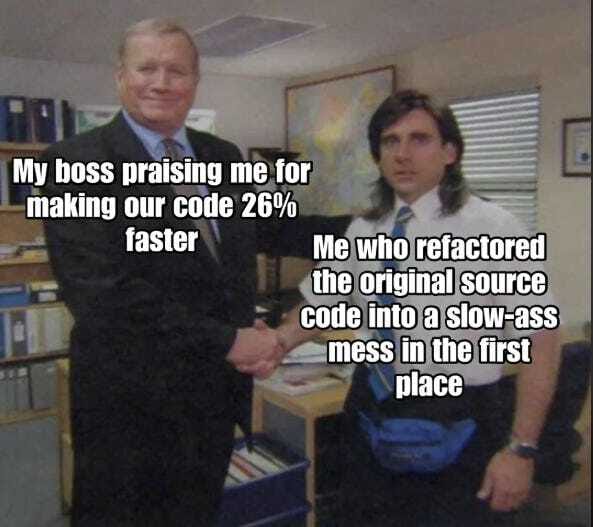

MEME OF THE WEEK

JOB OF THE WEEK

Senior GenAI Machine Learning Engineers, LLM Products, Applied AI // Databricks (San Francisco, US)

Databricks is hiring senior GenAI machine learning engineers to advance its applied AI products, including the Databricks Assistant and AI/BI Genie. The role focuses on improving LLM performance and expanding GenAI capabilities across its platform in 2025.

Responsibilities

Develop and deploy LLM-based features across Databricks products

Design and optimize ML pipelines for rapid experimentation

Collaborate with research and engineering teams on applied AI projects

Build scalable backend systems supporting GenAI applications

Requirements

2-8 years in machine learning engineering or academic research

Expertise in Python, PyTorch/TensorFlow, and scalable ML systems

Experience with LLM fine-tuning and prompt optimization

Strong skills in model deployment, testing, and monitoring

Find more roles on our new jobs board - and if you want to post a role, get in touch.

ML CONFESSIONS

Fixed by Proxy

I pushed a config change without double-checking the params and broke part of the pipeline. At the next standup, the infra lead asked, ‘Anyone been in that section recently?’ I kept my camera off and stayed quiet. A senior engineer offered to check, spent an hour poking around, and announced it was fixed. I sat there pretending to take notes, heart rate finally dropping back to normal.

Share your confession here.