Following Astronomer's hiring of Gwyneth Paltrow, former CEO Andy Byron has hired his own temporary spokesperson.

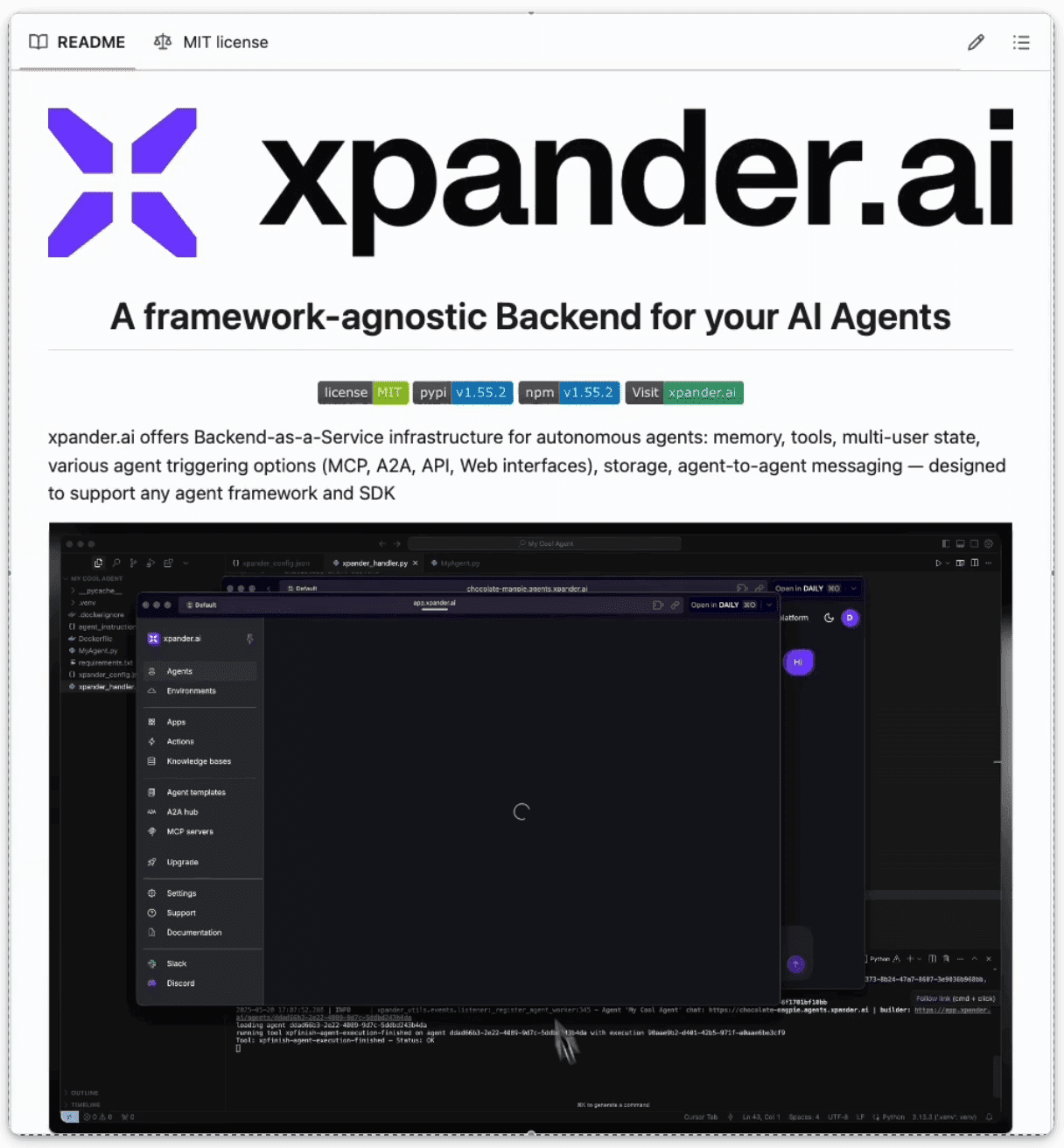

xpander.ai: One Way to Tackle the Agent Infrastructure Problem, by Rohit Ghumare

What You'll Learn Today:

The Hidden Infrastructure Problem - Why 80% of AI agent work isn't the agent itself

xpander.ai Solution Overview - Backend-as-a-Service for production AI agents

Step-by-Step Implementation - From agent architecture to database setup

Complete Customer Service Agent - Working code with tools, memory, and event handling

Advanced Production Strategies - Multi-model support, database management, scaling

Framework Integration - Agno, Xpander SDK, and multiple AI model support

Production Deployment - CLI interface, event processing, and monitoring

Key Takeaways & Next Steps - Engineering insights and framework comparisons

Estimated Reading Time: 15-18 minutes

Implementation Time: 2-4 hours for basic setup, 1-2 days for production deployment

Three months ago, a startup with a team of five engineers was struggling to scale their AI customer service agent. The agent worked perfectly for single conversations, but when they tried to handle multiple users simultaneously, everything fell apart. Memory leaked between conversations, tools crashed under load, and state management became a nightmare.

Sound familiar? If you've ever tried to build production AI agents, you've probably hit the same wall.

The problem isn't your code; it's that building agent infrastructure is incredibly complex. You need memory management, tool orchestration, state persistence, multi-user isolation, event streaming, and guardrails. Most teams end up building all of this from scratch, which takes months and often breaks in production.

The Infrastructure Problem Nobody Talks About

Here's what most AI agent tutorials don't tell you: the agent itself is often only about 20% of the work. The other 80% is infrastructure:

Memory Management: How do you persist conversation history across sessions?

Tool Integration: How do you safely execute external API calls and code?

Multi-User State: How do you isolate user data and prevent cross-contamination?

Event Streaming: How do you handle real-time updates and notifications?

Guardrails: How do you prevent agents from doing dangerous things?

Scaling: How do you handle thousands of concurrent conversations?

Most teams spend 6-12 months building this infrastructure before they can focus on their actual AI product.

One Way to Solve It: xpander.ai as a Backend Layer

There are a few emerging approaches to this problem. Some teams rely on general-purpose orchestration frameworks like LangChain or CrewAI. Others use cloud-native infrastructure patterns and assemble their own stack.

xpander.ai is one managed approach: it provides backend infrastructure for AI agents as a service. If you're looking for something pre-built, here's what it offers:

Framework-agnostic integration (OpenAI, Anthropic, LangChain, CrewAI, etc.)

Persistent memory and conversation state

Tooling support with MCP compatibility

Multi-user state management with isolation

Real-time event streaming

Built-in guardrails and security

Agent-to-agent communication

This kind of setup can significantly cut down the time it takes to get a production-ready agent live - sometimes reducing months of work to just a few days, depending on your architecture and use case.

Step-by-Step: Setting Up xpander.ai for Production Agents

Let's build a customer service agent with xpander.ai and the Agno framework.

1. Define Your Agent Architecture

Before diving into code, plan your agent's capabilities:

Core Agent Functions

Conversation handling and memory persistence

Tool access and external API integration

User state management and personalization

Event handling and real-time updates

Infrastructure Requirements

Multi-user isolation and data security

Scalable hosting and load balancing

Monitoring and observability

Backup and disaster recovery

2. Install and Configure xpander SDK

Install the xpander SDK for your preferred language:

Initialize your xpander client, following the patterns used in the example project:
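Whatever client the SDK provides, it needs credentials first. The sketch below loads them with the standard library only: it reads xpander_config.json and lets environment variables override the file. The field names (api_key, organization_id, agent_id) and the XPANDER_ variable prefix are assumptions for illustration, not the SDK's documented settings, and the client construction itself is left out.

```python
import json
import os
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class XpanderSettings:
    # Hypothetical field names; match them to what your SDK version expects.
    api_key: str
    organization_id: str
    agent_id: str


def load_settings(config_path: str = "xpander_config.json") -> XpanderSettings:
    """Read settings from a JSON file, letting environment variables override them."""
    data: dict = {}
    path = Path(config_path)
    if path.exists():
        data = json.loads(path.read_text(encoding="utf-8"))

    values = {}
    for field in ("api_key", "organization_id", "agent_id"):
        # Assumed naming scheme: XPANDER_API_KEY overrides "api_key" from the file
        env_value = os.environ.get(f"XPANDER_{field.upper()}")
        value = env_value if env_value else data.get(field)
        if not value:
            raise ValueError(f"missing required setting: {field}")
        values[field] = value
    return XpanderSettings(**values)
```

How these values are handed to the SDK client depends on the SDK version; check its documentation for the exact constructor.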

3. Implement Memory and State Management

Set up persistent memory using Agno framework patterns:
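This sketch does not use Agno's own APIs. It is a framework-neutral illustration of the same idea with Python's built-in sqlite3 module: every read and write is keyed by user_id and session_id, which is what keeps one customer's history out of another customer's context. The table and column names are hypothetical.

```python
import sqlite3
from datetime import datetime, timezone


class ConversationMemory:
    """Per-user, per-session chat history stored in SQLite (stdlib only)."""

    def __init__(self, db_path: str = "agent_memory.db") -> None:
        self.conn = sqlite3.connect(db_path)
        self.conn.execute(
            """CREATE TABLE IF NOT EXISTS messages (
                   id INTEGER PRIMARY KEY AUTOINCREMENT,
                   user_id TEXT NOT NULL,
                   session_id TEXT NOT NULL,
                   role TEXT NOT NULL CHECK (role IN ('user', 'assistant', 'tool')),
                   content TEXT NOT NULL,
                   created_at TEXT NOT NULL
               )"""
        )
        # Index keeps per-user history lookups fast as the table grows
        self.conn.execute(
            "CREATE INDEX IF NOT EXISTS idx_messages_user_session "
            "ON messages (user_id, session_id, id)"
        )
        self.conn.commit()

    def add(self, user_id: str, session_id: str, role: str, content: str) -> None:
        with self.conn:  # commits on success, rolls back on error
            self.conn.execute(
                "INSERT INTO messages (user_id, session_id, role, content, created_at) "
                "VALUES (?, ?, ?, ?, ?)",
                (user_id, session_id, role, content, datetime.now(timezone.utc).isoformat()),
            )

    def history(self, user_id: str, session_id: str, limit: int = 20) -> list[tuple[str, str]]:
        """Return the last `limit` messages for this user and session, oldest first."""
        rows = self.conn.execute(
            "SELECT role, content FROM messages "
            "WHERE user_id = ? AND session_id = ? ORDER BY id DESC LIMIT ?",
            (user_id, session_id, limit),
        ).fetchall()
        return list(reversed(rows))
```

Because every query filters on user_id, a bug elsewhere in the agent cannot accidentally pull another user's messages into the prompt through this class.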

4. Integrate Tools and External APIs

Connect your agent to external services using Agno tool patterns:
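A framework-neutral sketch of the pattern: a registry decorator plus a call_tool wrapper that validates arguments and turns exceptions into structured errors, so a failing external API returns an error message to the agent instead of crashing the conversation. The tool decorator here is a hypothetical stand-in, not Agno's @tool, and the order data is fake.

```python
import inspect
from typing import Any, Callable

TOOLS: dict[str, Callable[..., Any]] = {}


def tool(func: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function as an agent tool (hypothetical decorator, not Agno's @tool)."""
    TOOLS[func.__name__] = func
    return func


def call_tool(name: str, **kwargs: Any) -> dict[str, Any]:
    """Run a registered tool, turning failures into structured errors instead of crashes."""
    func = TOOLS.get(name)
    if func is None:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        inspect.signature(func).bind(**kwargs)  # reject bad arguments before running
    except TypeError as exc:
        return {"ok": False, "error": f"bad arguments: {exc}"}
    try:
        return {"ok": True, "result": func(**kwargs)}
    except Exception as exc:  # a failing external API should not take down the agent
        return {"ok": False, "error": f"{type(exc).__name__}: {exc}"}


# Stand-in for an external order API (hypothetical data)
_ORDERS = {"A100": "shipped", "A101": "processing"}


@tool
def get_order_status(order_id: str) -> str:
    """Look up an order's status by its ID."""
    if order_id not in _ORDERS:
        raise KeyError(f"order {order_id} not found")
    return _ORDERS[order_id]
```

Returning {"ok": False, ...} gives the model something it can explain to the customer ("I couldn't find that order") rather than an unhandled exception.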

5. Set Up Main Execution and Event Handling

Create the main execution files: main.py for local CLI runs and xpander_handler.py for event-driven production runs.

6. Configuration and Environment Setup

Create the required configuration files:
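Secrets such as API keys typically live in a .env file next to xpander_config.json. The widely used python-dotenv package handles this and more edge cases; if you would rather avoid the dependency, a minimal stdlib reader looks like this. It handles only simple KEY=VALUE lines with optional quotes and comment lines, and by default it does not overwrite variables already set in the environment.

```python
import os
from pathlib import Path


def load_dotenv_file(path: str = ".env", override: bool = False) -> dict[str, str]:
    """Minimal .env reader: KEY=VALUE lines, '#' comments, optional quotes."""
    loaded: dict[str, str] = {}
    file = Path(path)
    if not file.exists():
        return loaded
    for raw in file.read_text(encoding="utf-8").splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue  # skip blanks, comments, and malformed lines
        key, value = line.split("=", 1)  # values may themselves contain '='
        key, value = key.strip(), value.strip()
        # Strip one layer of matching quotes: API_KEY="abc" -> abc
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        if override or key not in os.environ:
            os.environ[key] = value
        loaded[key] = value
    return loaded
```

Keeping real environment variables authoritative (override=False) lets a container or CI system inject production secrets without editing the file.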

7. Project Structure

Organize your files following the example project's structure:
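The files this guide names are main.py, xpander_handler.py, xpander_config.json, .env, and a Dockerfile. A small preflight sketch can check that they exist and create empty placeholders for any that are missing. requirements.txt is an assumed addition, and the original project may contain more files than this list.

```python
from pathlib import Path

# File roles taken from this guide; requirements.txt is an assumed addition.
EXPECTED_FILES = {
    "main.py": "local CLI entry point",
    "xpander_handler.py": "production event handler",
    "xpander_config.json": "xpander credentials and agent settings",
    ".env": "environment variables such as API keys",
    "Dockerfile": "container build",
    "requirements.txt": "pinned Python dependencies (assumed)",
}


def missing_files(root: str = ".") -> list[str]:
    """Return the expected project files that do not exist under `root`."""
    base = Path(root)
    return sorted(name for name in EXPECTED_FILES if not (base / name).is_file())


def scaffold(root: str = ".") -> list[str]:
    """Create placeholders for missing files; never overwrite existing ones."""
    created = missing_files(root)
    for name in created:
        # JSON files get an empty object so they parse; everything else starts empty
        (Path(root) / name).write_text("{}\n" if name.endswith(".json") else "", encoding="utf-8")
    return created
```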

Advanced Production Strategies

Multiple Model Support

Configure your agent to use different models based on requirements:
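One simple routing policy is to pick the cheapest model that meets the request's constraints. The sketch below assumes two constraints (prompt size and whether strong reasoning is needed). The model names, context sizes, and prices are placeholders, not real provider figures; substitute the models you actually run, such as Claude Sonnet 4 or GPT-4o.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str              # placeholder ID; use your provider's real model identifier
    context_tokens: int    # assumed context window
    cost_per_1k: float     # assumed blended price in USD per 1K tokens
    strong_reasoning: bool


# Hypothetical catalogue: the numbers are illustrative, not real pricing.
MODELS = [
    ModelOption("small-fast-model", 16_000, 0.0005, False),
    ModelOption("general-model", 128_000, 0.005, True),
    ModelOption("long-context-model", 200_000, 0.009, True),
]


def pick_model(prompt_tokens: int, needs_reasoning: bool) -> ModelOption:
    """Choose the cheapest model that satisfies the request's requirements."""
    candidates = [
        m for m in MODELS
        if m.context_tokens >= prompt_tokens and (m.strong_reasoning or not needs_reasoning)
    ]
    if not candidates:
        raise ValueError(f"no configured model fits {prompt_tokens} prompt tokens")
    return min(candidates, key=lambda m: m.cost_per_1k)
```

Keeping the catalogue as data means adding or retiring a model is a configuration change rather than a code change.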

Database Management

Implement proper database management for your project:
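A sketch of two habits that matter once the SQLite file holds real data: versioned schema migrations tracked with SQLite's PRAGMA user_version, and a connection helper that enables foreign-key enforcement and a busy timeout. The customers and tickets tables are hypothetical examples.

```python
import sqlite3
from contextlib import contextmanager
from typing import Iterator

# Ordered schema migrations; position + 1 is the schema version each one produces.
MIGRATIONS = [
    "CREATE TABLE customers (id TEXT PRIMARY KEY, email TEXT NOT NULL UNIQUE)",
    "CREATE TABLE tickets (id INTEGER PRIMARY KEY, customer_id TEXT NOT NULL "
    "REFERENCES customers(id), status TEXT NOT NULL DEFAULT 'open')",
]


def migrate(conn: sqlite3.Connection) -> int:
    """Apply migrations newer than the database's user_version; return the new version."""
    current = conn.execute("PRAGMA user_version").fetchone()[0]
    for version, statement in enumerate(MIGRATIONS[current:], start=current + 1):
        conn.execute("BEGIN")
        try:
            conn.execute(statement)
            # version is an int from enumerate, so formatting it into the PRAGMA is safe
            conn.execute(f"PRAGMA user_version = {version}")
            conn.execute("COMMIT")  # the schema change and version bump land together
        except Exception:
            conn.execute("ROLLBACK")
            raise
    return conn.execute("PRAGMA user_version").fetchone()[0]


@contextmanager
def open_db(path: str) -> Iterator[sqlite3.Connection]:
    """Open a connection with safer defaults, migrate it, and always close it."""
    conn = sqlite3.connect(path, isolation_level=None)  # we issue BEGIN/COMMIT ourselves
    try:
        conn.execute("PRAGMA foreign_keys = ON")    # enforce REFERENCES constraints
        conn.execute("PRAGMA busy_timeout = 5000")  # wait up to 5 s on a locked database
        migrate(conn)
        yield conn
    finally:
        conn.close()
```

Re-running migrate() on an up-to-date database is a no-op, so it is safe to call on every startup.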

Docker Deployment

Create a Dockerfile for your project:

Production Deployment Checklist

Pre-Production Setup

Configure environment variables in .env

Set up xpander_config.json with real credentials

Test all tools with python main.py

Initialize database with proper permissions

Configure Slack integration (optional)

Security Configuration

Enable database encryption

Set up proper API key rotation

Configure rate limiting for tools

Implement input validation and sanitization

Set up audit logging
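Two items on this list, rate limiting for tools and input sanitization, can be sketched in plain Python. The token bucket allows short bursts but caps the sustained rate per user and tool; the sanitizer normalizes Unicode, drops control and invisible format characters (such as zero-width spaces), and rejects oversized input. The limits (1 call per second, bursts of 5, 4,000 characters) are assumptions to tune, and this complements, rather than replaces, validating each tool's arguments.

```python
import time
import unicodedata
from collections import defaultdict
from typing import Callable


class TokenBucket:
    """Allow `rate` calls per second on average, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per (user, tool) pair, so one noisy user cannot starve the others.
_buckets: dict[tuple[str, str], TokenBucket] = defaultdict(
    lambda: TokenBucket(rate=1.0, capacity=5)
)


def allow_tool_call(user_id: str, tool_name: str) -> bool:
    return _buckets[(user_id, tool_name)].allow()


MAX_INPUT_CHARS = 4_000  # assumed limit; tune to your use case


def sanitize_input(text: str) -> str:
    """Normalize Unicode, drop control/format characters (keeping newlines, tabs), cap length."""
    text = unicodedata.normalize("NFKC", text)
    cleaned = "".join(
        ch for ch in text if ch in "\n\t" or unicodedata.category(ch)[0] != "C"
    )
    if len(cleaned) > MAX_INPUT_CHARS:
        raise ValueError(f"input exceeds {MAX_INPUT_CHARS} characters")
    return cleaned.strip()
```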

Monitoring and Observability

Configure error tracking and alerting

Set up performance monitoring

Implement customer satisfaction tracking

Monitor token usage and costs

Set up backup and recovery procedures
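Token usage can be tracked per user and model and converted into an estimated cost. In the sketch below, the model names and per-million-token prices are hypothetical placeholders; real prices change, so load them from configuration in practice. Resetting the totals at the end of each billing window is left to your scheduler.

```python
from collections import defaultdict
from typing import Optional

# Hypothetical USD prices per 1M tokens as (input, output); use your provider's published rates.
PRICES_PER_MILLION = {
    "model-a": (3.00, 15.00),
    "model-b": (0.50, 1.50),
}


class UsageTracker:
    """Accumulate token counts per user and model, and convert them to estimated cost."""

    def __init__(self, budget_usd: float) -> None:
        self.budget_usd = budget_usd
        # (user_id, model) -> [input_tokens, output_tokens]
        self.totals: dict[tuple[str, str], list[int]] = defaultdict(lambda: [0, 0])

    def record(self, user_id: str, model: str, input_tokens: int, output_tokens: int) -> None:
        counts = self.totals[(user_id, model)]
        counts[0] += input_tokens
        counts[1] += output_tokens

    def cost(self, user_id: Optional[str] = None) -> float:
        """Estimated spend in USD, for one user or for everyone."""
        total = 0.0
        for (uid, model), (inp, out) in self.totals.items():
            if user_id is None or uid == user_id:
                in_price, out_price = PRICES_PER_MILLION[model]
                total += (inp * in_price + out * out_price) / 1_000_000
        return total

    def over_budget(self) -> bool:
        """True once estimated spend exceeds the budget; wire this to your alerting."""
        return self.cost() > self.budget_usd
```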

Scaling Considerations

Configure auto-scaling policies

Set up load balancing if needed

Implement caching strategies

Monitor database performance

Plan for traffic spikes
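For caching, a small time-to-live cache in front of slow or rate-limited tool calls is often enough to start with. This sketch evicts the least recently written entry when it is full. Only cache results that can safely be slightly stale, such as order status lookups, and include the user ID in the key for any per-user data.

```python
import time
from typing import Any, Callable, Hashable


class TTLCache:
    """Cache values for `ttl` seconds, evicting the least recently written entry when full."""

    def __init__(self, ttl: float, max_entries: int = 1024,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl, self.max_entries, self.clock = ttl, max_entries, clock
        self._data: dict[Hashable, tuple[float, Any]] = {}  # key -> (expires_at, value)

    def get_or_compute(self, key: Hashable, compute: Callable[[], Any]) -> Any:
        now = self.clock()
        hit = self._data.get(key)
        if hit is not None and hit[0] > now:
            return hit[1]  # still fresh
        value = compute()
        self._data.pop(key, None)  # re-insert so dict order tracks write order
        self._data[key] = (now + self.ttl, value)
        if len(self._data) > self.max_entries:
            oldest = next(iter(self._data))  # dicts preserve insertion order
            del self._data[oldest]
        return value
```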

Follow the progress of the xpander.ai GitHub Repository to stay updated, and if you find it valuable, consider giving it a star ⭐️.

Framework Integrations:

OpenAI SDK Integration: Compatible with OpenAI's latest APIs

LangChain Support: Works with LangChain agent frameworks

CrewAI Integration: Supports CrewAI multi-agent workflows

Anthropic Claude: Compatible with Claude API

Google AI (Gemini): Works with Google's AI models

Motia (Unified Backend Framework): Modern backend for APIs, events, and AI agents

Production-Ready Features:

Database Management: SQLite with proper schema design

Error Handling: Comprehensive try/except blocks

Event Processing: Integration with Xpander events

Tool Integration: Working @tool patterns with external APIs

Memory Management: Persistent conversation history

Multi-Model Support: Flexible model selection

Development Workflow:

Start with CLI: Use python main.py for development

Test Tools: Verify each @tool function works correctly

Deploy with Events: Use python xpander_handler.py for production

Monitor Performance: Track metrics and user satisfaction

Scale Gradually: Add more tools and models as needed

Key Takeaways

For Engineering Teams:

Infrastructure Dominates: Most of the work in building AI agents lies in infrastructure (memory, orchestration, persistence, scaling), not just in model prompts.

Faster Setup: Tools like xpander.ai can significantly reduce build time, especially for teams starting from scratch.

Production Considerations: Such platforms include components like scaling, monitoring, and state isolation that are often missing in early-stage builds.

Framework Compatibility: xpander.ai can integrate with OpenAI, Anthropic, LangChain, CrewAI, or custom implementations.

For Technical Leaders:

Cost Awareness: Model routing and caching strategies may help reduce operational costs depending on usage patterns.

Security Context: Guardrails, audit logging, and isolation features can support teams working in regulated or multi-user environments.

Focus and Velocity: Moving common infrastructure concerns into reusable components can free engineers to focus on business logic.

Scalability Options: Built-in autoscaling can help handle bursty workloads without additional orchestration.

Framework Integration:

Agno Framework: Agent orchestration and tool interface layer

Xpander SDK: Handles backend communication, event routing, and configuration

Model Flexibility: Tested with Claude Sonnet 4, GPT-4o, and others

Database Support: Uses SQLite with structured schema for persistent state

Next Steps:

Try running the examples in the xpander.ai repository to test local setup

Explore how your existing monitoring stack could plug into an agent deployment

Contribute issues, improvements, or feedback to the open-source project as you go

Agent infrastructure isn’t solved yet, but reusable infrastructure can take you a long way.