Bad news for anyone who thought ChatGPT was too polite, eh.

HOT TAKE

Data vs Instinct

Inspired by this short chat with Fabricio, where he talked about testing 100 ideas and letting experiments win over the CEO’s gut.

When experiments and the CEO’s gut disagree, which wins in your org?

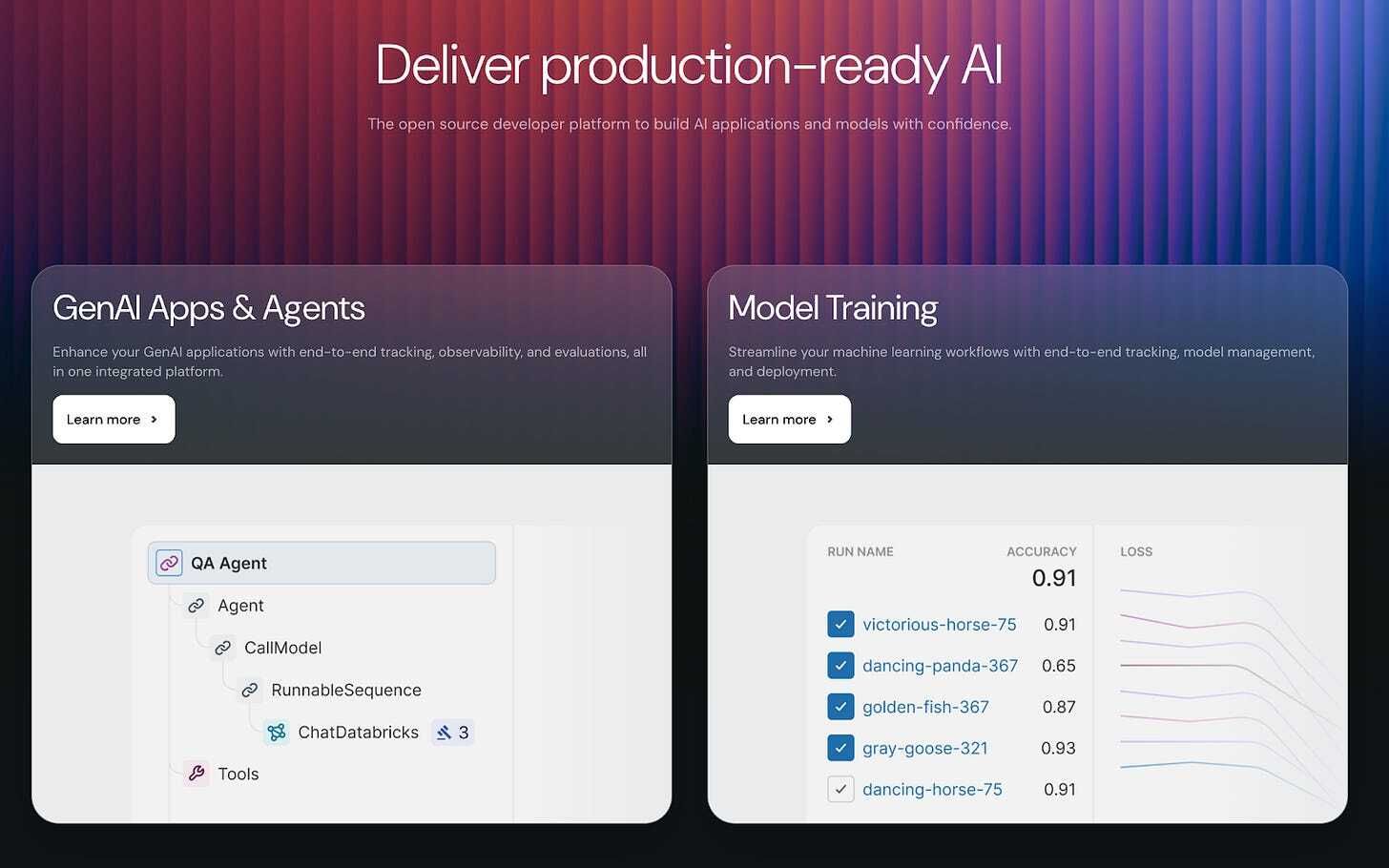

PRESENTED BY DATABRICKS

Managed MLflow: Production-Ready, Zero Setup

If you’re juggling LLMs, prompt versions, or debugging agent hallucinations, it’s time to check out MLflow.

This year, MLflow, the open source platform trusted by teams across the globe, launched MLflow 3.0 and a fully managed version, offering a solution to these common headaches. Managed MLflow on Databricks, built by the original creators, gives you a full-featured, enterprise-ready MLOps platform with zero setup required.

Track experiments, version models, and deploy seamlessly. For GenAI, get automatic tracing, agent evaluation, and monitoring dashboards out of the box, with no infrastructure headaches.

Managed MLflow helps you ship reliable models to production, faster.

Get started at mlflow.org.

Curated finds to help you stay ahead

Multimodal agent design showcased through a project page introducing a memory-driven system for long-horizon reasoning, paired with a benchmark suite built around real-world and web-sourced video tasks.

NVIDIA’s Nemotron Nano 2 is a hybrid Mamba-Transformer reasoning model family offering high-throughput long-context inference, backed by a massive 6.6 trillion-token dataset for pretraining.

AI agent coordination for software projects, with a Claude Code–driven protocol that links GitHub Issues and worktrees into a spec-driven workflow with full task traceability.

Semantic layer introduction via DuckDB tutorial: demonstrates how to define metrics in YAML, connect with DuckDB and Python, and keep consistent business logic across analysis tools.
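To make the semantic layer idea concrete, here is a minimal sketch of the pattern: metrics are declared once as data and compiled to SQL on demand, so every tool asks for `total_revenue` the same way. This is not the tutorial's code. The metric names, the `orders` table, and the helper functions are all hypothetical, a plain Python dict stands in for the YAML file, and the standard library's `sqlite3` stands in for DuckDB so the example runs without extra installs.

```python
import sqlite3

# Hypothetical metric definitions. In the setup the tutorial describes,
# these would live in a YAML file rather than a Python dict.
METRICS = {
    "total_revenue": {"table": "orders", "expression": "SUM(amount)"},
    "order_count": {"table": "orders", "expression": "COUNT(*)"},
}


def compile_metric(name, group_by=None):
    """Turn a metric definition into a SQL string."""
    metric = METRICS[name]
    select = f"{metric['expression']} AS {name}"
    if group_by:
        return (
            f"SELECT {group_by}, {select} FROM {metric['table']} "
            f"GROUP BY {group_by} ORDER BY {group_by}"
        )
    return f"SELECT {select} FROM {metric['table']}"


def run_metric(conn, name, group_by=None):
    """Compile the metric and run it on the given connection."""
    return conn.execute(compile_metric(name, group_by)).fetchall()


def demo_connection():
    """Build a tiny in-memory table to query (sqlite3 as a DuckDB stand-in)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [("EU", 10.0), ("EU", 5.0), ("US", 20.0)],
    )
    return conn


if __name__ == "__main__":
    conn = demo_connection()
    print(run_metric(conn, "total_revenue"))
    print(run_metric(conn, "order_count", group_by="region"))
```

The point of the design is that the business logic (`SUM(amount)`) lives in one place. A notebook, a dashboard, and a script can all call `run_metric` and get the same definition.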

MLOPS COMMUNITY

Traditional vs LLM Recommender Systems: Are They Worth It?

LinkedIn’s feed is quietly changing as LLMs start replacing the hand-crafted features behind traditional recommenders. Arpita explains what this means for speed, accuracy, and the cold start problem.

Prompts over features - Instead of feeding the model curated signals, LLMs can infer them directly, putting the real skill in how you ask the question.

Speed vs quality - Full-size LLMs can be too slow for live feeds, so teams use distilled models or run them offline for feature generation.

Faster personalisation - LLMs can tailor content with minimal data, beating traditional models in early-user scenarios.

A smarter feed is possible, but only if your infra can keep up.

Knowledge is Eventually Consistent

When code is the only reliable source of truth, what happens to everything else engineers need to remember? Devin talked about agents that don’t just scrape knowledge but actually build and maintain it.

Fact-based reasoning - Dosu’s new agent commits verified “facts” into a knowledge base, cutting out duplicated searches and improving with each use.

Audience-aware responses - The agent adjusts its behavior depending on who’s asking - from expert maintainers to first-time users.

Knowledge maintenance at scale - By tying documentation back to code as the ultimate system of record, Dosu detects inconsistencies and prompts updates before docs go stale.

The bigger your system, the more brittle knowledge becomes - Dosu’s approach is a bid to turn that fragility into a self-reinforcing loop of truth.
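For a rough feel of the "commit facts, skip duplicate searches" idea, here is a toy sketch. It is not Dosu's implementation: the `FactStore` class, its method names, and the rule that any non-empty search result counts as "verified" are all assumptions made for illustration.

```python
class FactStore:
    """Toy knowledge base: look up a stored fact before running a search."""

    def __init__(self, search_fn):
        self._search = search_fn  # hypothetical (and expensive) search step
        self._facts = {}          # normalized question -> committed fact
        self.searches = 0         # how many times we had to search

    @staticmethod
    def _normalize(question):
        # Collapse case and whitespace so near-identical questions share a key.
        return " ".join(question.lower().split())

    def answer(self, question):
        key = self._normalize(question)
        if key in self._facts:
            return self._facts[key]  # reuse the committed fact, no search
        self.searches += 1
        fact = self._search(question)
        if fact is not None:
            # Assumption: a non-empty result counts as verified and is committed.
            self._facts[key] = fact
        return fact


if __name__ == "__main__":
    def fake_search(question):
        # Stand-in for a real code or docs search.
        return "Python 3.9+" if "python" in question.lower() else None

    store = FactStore(fake_search)
    print(store.answer("Which Python version?"))
    print(store.answer("which   python version?"))  # served from the store
    print(store.searches)
```

Repeated questions hit the store instead of the search step, which is the sense in which such a system gets cheaper with each use.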

AI Changed Stack Overflow for the Better

Trust in AI code assistants is falling fast - even as adoption soars. Stack Overflow’s latest developer survey shows usage climbing from 60% to 80% over three years, but trust dropping from 40% to just 29%.

Why it matters: AI is great for boilerplate, but often fails on complex, context-heavy tasks. That’s where human-curated Q&A still outperforms.

Stack Overflow’s role: Their data now underpins most major LLMs, with official licensing deals after years of scraping battles.

Inside companies: Enterprises like Uber are plugging private Stack Overflow instances into agents, powering internal copilots with vetted, constantly updated answers.

The signal is clear: AI without trusted human knowledge hits a cliff.

What Does Multimodality Truly Mean For AI?

Multimodal AI is moving fast, but the real question is whether today’s systems can truly reason across the same streams of information humans use every day. This piece breaks down where progress is real and where the bottlenecks remain.

Models - From CLIP to Gemini, architectures are pushing toward any-to-any modality reasoning, though alignment and efficiency are still major challenges.

Processing - GPUs and custom accelerators have made real-time multimodal workloads feasible, but cost and optimization shape what’s deployable.

Data foundations - Embeddings alone flatten context; multimodal databases and knowledge graphs are needed to preserve structure and provenance.

The takeaway: models and hardware are advancing quickly, but data management will decide how far multimodal AI can really go.

IN PERSON EVENTS

Ahead of the Agent Builder Summit in San Francisco (Sept 4), we’re adding two practical, hands-on workshops to the mix. Both are designed for people who want to stop talking about agents and actually build them.

Here’s what’s on offer:

4 hours of building (10am–2pm)

Real projects you’ll take away and extend

Food, drinks, and plenty of builder energy

Direct access to experts who’ve solved these problems at scale

A small, focused group of practitioners

You can choose between:

Agents + Authentication: Learn how to bypass OAuth nightmares, connect LLMs to APIs more intelligently, and set up agents that actually run in production.

Voice AI Agents: Go from STT → LLM → TTS toy examples to production-ready voice systems, complete with deployment strategies, observability templates, and real-world design tips.

MEME OF THE WEEK

Job of the week

Sr. Engineering Manager - AI Observability Platform // Databricks (San Francisco, US)

Databricks is hiring a Senior Engineering Manager to lead development of its AI Quality Observability Platform. The role focuses on building systems for monitoring and evaluating GenAI applications at scale, driving reliability, and guiding product direction.

Responsibilities:

Lead product strategy for GenAI Quality Observability platform

Drive Agent Monitoring product from Beta to General Availability

Collaborate across teams to deliver scalable observability solutions

Engage with customers to refine monitoring and evaluation features

Requirements:

5+ years’ experience building AI or ML systems

Proven track record with highly available cloud services

Experience managing and scaling high-performance engineering teams

Strong knowledge of service-level objectives and system reliability

Find more roles on our new jobs board - and if you want to post a role, get in touch.

ML CONFESSIONS

Found In The Wild - Too Fast to Be Real

Share your confession here.