I know you’ve come up with more inventive ones than this during production.

PODCAST

Real-time Feature Generation at Lyft

Part of the problem with real-time is no one agrees what it means – until it breaks. Then everyone agrees it feels like forever.

At Lyft, real-time ML powers critical systems like surge pricing and demand forecasting. Rakesh explained how they moved from cron jobs to Apache Beam on Flink, cutting latency and enabling consistent one-minute feature updates. Geohash-based routing solved early hot shard issues and shaped their internal geospatial feature store:

Features are stored hierarchically (GH6 to GH4), so models can request region- or block-level data from a single source

A shared API supports different aggregation levels, improving reuse and simplifying scale across models
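Here is a minimal pure-Python sketch of the geohash hierarchy. It is not Lyft's implementation, which runs on Apache Beam and Flink.

It assumes events arrive as `(timestamp_seconds, lat, lon)` tuples. It buckets them into one-minute tumbling windows and writes each count under every geohash prefix from GH6 down to GH4. A GH4 cell is just the first four characters of any GH6 hash inside it, so one store keyed by `(minute, geohash_prefix)` can answer both block-level and region-level queries.

The function names and key layout are hypothetical.

```python
from collections import defaultdict

# Standard geohash base32 alphabet (omits a, i, l, o).
_BASE32 = "0123456789bcdefghjkmnpqrstuvwxyz"


def geohash_encode(lat: float, lon: float, precision: int = 6) -> str:
    """Encode a coordinate as a geohash by interleaving lon/lat bisection bits."""
    lat_lo, lat_hi = -90.0, 90.0
    lon_lo, lon_hi = -180.0, 180.0
    chars, bits, bit_count, use_lon = [], 0, 0, True
    while len(chars) < precision:
        if use_lon:  # even bits refine longitude
            mid = (lon_lo + lon_hi) / 2
            if lon >= mid:
                bits, lon_lo = (bits << 1) | 1, mid
            else:
                bits, lon_hi = bits << 1, mid
        else:  # odd bits refine latitude
            mid = (lat_lo + lat_hi) / 2
            if lat >= mid:
                bits, lat_lo = (bits << 1) | 1, mid
            else:
                bits, lat_hi = bits << 1, mid
        use_lon = not use_lon
        bit_count += 1
        if bit_count == 5:  # every 5 bits become one base32 character
            chars.append(_BASE32[bits])
            bits, bit_count = 0, 0
    return "".join(chars)


def build_feature_store(events, finest: int = 6, coarsest: int = 4):
    """Count events per one-minute window at every geohash level.

    Hypothetical layout: keys are (minute_bucket, geohash_prefix), so coarser
    levels are simply shorter prefixes of the same fine-grained hash.
    """
    store = defaultdict(int)
    for ts, lat, lon in events:
        minute = int(ts // 60)  # one-minute tumbling window
        gh = geohash_encode(lat, lon, finest)
        for p in range(coarsest, finest + 1):
            store[(minute, gh[:p])] += 1
    return store


def get_feature(store, minute: int, geohash: str) -> int:
    """Single lookup API: the caller picks the level via the geohash length."""
    return store.get((minute, geohash), 0)
```

For example, `geohash_encode(42.6, -5.6, 5)` returns `"ezs42"`. From the same store, `get_feature(store, 0, "ezs4")` returns the region-level count for the first minute, and a six-character key returns the block-level count.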

There might be debate about what real-time means, but there's no debate that this episode is a good time.

A Substack post arguing that AI agent benchmarks like WebArena are fundamentally flawed, with a proposed 43-point checklist to improve evaluation reliability.

A research post detailing efforts to optimise an open-source reasoning model down to ~3 ms of latency per token, fast enough for interactive real-time applications.

An AWS blog post introducing AgentCore, a new Bedrock platform for securely deploying and managing AI agents at scale, with support for custom frameworks, memory, identity, tool use, and observability.

A technical guide to running LLMs in production with BentoML, covering setup, batching, caching, performance tuning, and monitoring for both open-source and custom models.

PODCAST

Enterprise AI Adoption Challenges

You’d think we’d have figured out tokens by now. Unfortunately, this episode doesn't help with that, but it does share an approach to driving LLM adoption across a 100-company portfolio.

Toqan's team explained how they’re scaling by balancing advanced features for power users with intuitive entry points for everyone else. The real challenge isn’t technical. It’s getting people to trust and actually use the tools.

One tactic that’s helped build confidence is steering new users toward tasks that reliably succeed:

Prompted onboarding flows guide people to summarize docs or translate text, rather than just “ask anything”

Live demos and walkthroughs show how real teams are using Toqan to get work done

It's a great episode, so clicking below to listen isn't a token gesture.

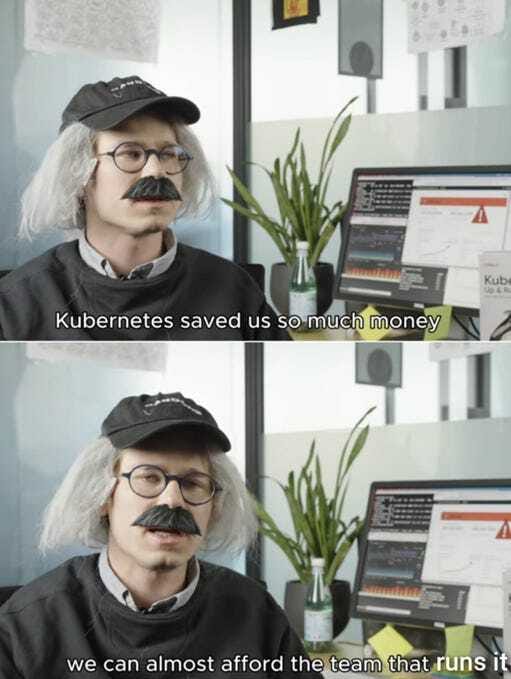

MEME OF THE WEEK

BLOG

Automating Knowledge Graph Creation with Gemini and ApertureDB - Part 1

Cmd+F helped you find a needle in your haystack, but AI’s now helping you pull out all the needles, screws, and paperclips, label each one properly, and store them neatly in drawers.

This tutorial covers how to extract and store structured entities from long documents using Gemini 2.5 Flash, LangChain, and ApertureDB. Once Gemini has defined the entity classes and their properties, specific instances are extracted from a 42-page PDF in parallel, deduplicated, and inserted into ApertureDB.

Extraction is tightly structured with templated prompts and Pydantic schemas. Highlights include:

Class schema generation: Gemini maps general concepts like “Computing System” to relevant properties such as “definition” and “use cases”.

Efficient scaling: LangChain processes document chunks concurrently, then merges and cleans results before insertion.
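The merge-and-deduplicate step is the part most worth getting right. Below is a minimal sketch of that pattern, not the tutorial's code. Three stand-ins are assumed:

- A regex stub (`stub_extract`) takes the place of the Gemini call.
- A frozen dataclass takes the place of the Pydantic schema.
- Python's `ThreadPoolExecutor` takes the place of LangChain's concurrent processing.

All names here are hypothetical. Overlapping chunks keep entities from being cut at chunk boundaries. That overlap is also why duplicates appear and have to be merged.

```python
import re
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    """Stand-in for a Pydantic entity schema (hypothetical fields)."""
    name: str
    entity_class: str


def chunk_sentences(sentences: list, size: int = 2, overlap: int = 1) -> list:
    """Group sentences into overlapping chunks so no entity is cut at a boundary."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    step = size - overlap
    last_start = max(len(sentences) - overlap, 1)
    return [" ".join(sentences[i:i + size]) for i in range(0, last_start, step)]


# Hypothetical stub standing in for a Gemini call: it only recognises
# "Name [Class]" annotations, so the pipeline can run offline.
_ANNOTATION = re.compile(r"(\w+) \[(\w+)\]")


def stub_extract(chunk: str) -> list:
    return [Entity(name, cls) for name, cls in _ANNOTATION.findall(chunk)]


def extract_entities(sentences, extractor=stub_extract, workers: int = 4) -> list:
    """Extract per chunk concurrently, then merge and deduplicate before insertion."""
    chunks = chunk_sentences(sentences)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        per_chunk = list(pool.map(extractor, chunks))  # results keep chunk order
    merged = {}
    for entities in per_chunk:
        for e in entities:
            # Case-insensitive key so "Gemini" and "gemini" collapse to one entity;
            # the first occurrence wins.
            merged.setdefault((e.name.casefold(), e.entity_class.casefold()), e)
    return list(merged.values())
```

Swapping `stub_extract` for a real model call only changes the `extractor` argument. Keying the merge on a case-folded name is the simplest choice; a real pipeline may need fuzzier matching.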

You won't need Cmd+F to find the blog, just click below to read.

ML CONFESSIONS

When I started at a new place, they asked me to clean up the evaluation pipeline for this product classification model. The codebase had gotten pretty messy over time: lots of custom JSON configs, custom data loaders, and not a lot of documentation, so it took a while just to understand what was going on.

I ended up rewriting the dataset splitting logic to make it more readable, and I leaned on this utility function someone else had written a while back. It all seemed to work. Training ran smoothly, and the new model looked better in every way - better precision, better recall, just generally better numbers than what they had before.

A couple of sprints later I was writing tests and realised that the utility I'd used wasn't actually doing a proper split; it was just shuffling the indices. So the train and test sets were basically overlapping, like, almost entirely.
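For anyone who hasn't hit this bug, here is a hypothetical reconstruction of the failure mode next to a correct split. The function names are illustrative, and the confessor's actual utility isn't shown.

In practice, scikit-learn's `train_test_split` handles splitting. The key property is also easy to test directly: train and test indices must be disjoint.

```python
import random


def buggy_split(n: int, test_frac: float = 0.2, seed: int = 0):
    """Hypothetical bug: indices are shuffled, but train is the whole list."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_test = int(n * test_frac)
    return idx, idx[:n_test]  # every test index also appears in train


def proper_split(n: int, test_frac: float = 0.2, seed: int = 0):
    """Shuffle once, then partition into disjoint train and test sets."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_test = int(n * test_frac)
    return idx[n_test:], idx[:n_test]


def leakage(train, test) -> float:
    """Fraction of test indices that also appear in train (should be 0.0)."""
    return len(set(train) & set(test)) / len(test) if test else 0.0
```

Here `leakage(*buggy_split(100))` returns `1.0`, while `leakage(*proper_split(100))` returns `0.0`. An assertion along those lines in the test suite would have flagged the problem before any numbers were reported.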

No one caught it because the business team only cared about what happened in production, and performance there did improve, mostly because I’d unknowingly fixed a broken feature engineering step that had been skewing everything.

I left the code in place but added a warning in the README and a proper split in a draft PR. I never merged it. I don’t think I wanted to see what the numbers would look like.

Share your confession here.

HOT TAKE

Real-time ML is the new microservices: it makes sense at scale, but most teams cargo-cult their way into a mess they can't debug.

Batch mindset or streaming mindset?